Relevant Overviews

- Content Strategy

- Online Strategy

- Online Community Management

- Social Media Strategy

- Content Creation & Marketing

- Online Architecture

- Digital Transformation

- Innovation Strategy

- Surveillance Capitalism, Social media and Polarisation (Overview)

- Disinformation in the US 2020 elections

- Communications Tactics

- Psychology

- Social Web

- Media

- Politics

- Communications Strategy

- Science&Technology

- Business

Facebook’s own recommendation algorithms have been inviting users to like pages that share pro-military propaganda that violates the platform’s rules... in 2018 the social media company admitted it could have done more to prevent incitement of violence in the run-up to a military campaign against the Rohingya Muslim minority ...hired more than 100…

When changing their Newsfeed algorithm, Facebook's tests found "a dramatic impact on the reach of right-wing “junk sites,”... [by] January 2018 a second iteration dialed up the harm to progressive-leaning news organizations instead... Mother Jones was singled out as one that would suffer... Daily Wire was identified as one that would be…

"Facebook quietly stopped recommending that people join online groups dealing with political or social issues", and will "assess when to lift them afterwards, but they are temporary." This part of Facebook's AI plays a key role in pushing "people down a path of radicalization... groups reinforce like-minded views …

Summary of peer-reviewed research - “Understanding Echo Chambers and Filter Bubbles: The Impact of Social Media on Diversification and Partisan Shifts in News Consumption” - by its authors, who found: more time spent on Facebook, the more polarized their online news consumption becomes... Facebook usage is five times more polarizing for conservative…

Facebook's 2017 newsfeed algorithm tweak intentionally reduced traffic to left-leaning outlets "to avoid adding fuel to critics’ argument that the platform has an anti-conservative bias"... and overcorrected. "Mother Jones saw a roughly $400,000 drop in the site’s annual revenue"

"the right is better at connecting with people on a visceral level", according to Facebook, portraying themselves as a neutral mirror. Rightwing content resonates by "speaking to 'an incredibly strong, primitive emotion' by touching on 'nation, protection, the other, anger, fear.'" "This may be true, but it…

Facebook is piloting a circuit breaker to stop viral spread of posts... might staunch the flow of dangerous incitement and misinformation ... America’s Frontline Doctors... pushed hydroxychloroquine ... Funded with dark money, promoted by influential accounts, advanced by algorithmic recommendations, and shared in large private Facebook groups... …

a new Al Jazeera investigation that identified 120 Facebook pages — with a total of over 800,000 likes ... Facebook frequently talks up its algorithm and moderators for catching a lot of hateful content — but ... some of these pages have been online for a decade.

Facebook is wary of being drawn further into a political argument ahead ... divisive presidential election ... steer clear of fact-checking political advertisements... keen not to antagonise Mr Trump ... claiming that social media platforms are biased against Republican ... purely a business decision ... ubiquitous... it must always align with…

Wylie ... the gay Canadian vegan who somehow ended up creating “Steve Bannon’s psychological warfare mindfuck tool”.... In 2014 Steve Bannon ... was Wylie’s boss. And Robert Mercer... Republican donor, was Cambridge Analytica’s investor... to bring big data and social media to an established military methodology – “information operations” – then t…

the capacity to spread ideas ... no longer limited by access to expensive... infrastructure. It’s limited instead by one’s ability to garner and distribute attention. And right now, the flow of the world’s attention is structured... by just a few digital platforms:... increasingly stand in for the public sphere itself... at their core... They’re …

discovering a filtering algorithm's existence in a curated feed influences user experience, but it remains unclear how users reason about the operation of these algorithms.... Interviews revealed 10 "folk theories" of automated curation... Users who were given a probe into the algorithm's operation via an interface ... visible …

In 2018, we will prioritize News from publications that the community rates as trustworthy... that people find informative... relevant to people’s local community... We surveyed ... people using Facebook across the US to gauge their familiarity with, and trust in, various different sources ...

The short-term, dopamine-driven feedback loops that we have created are destroying how society works... “exploit[s] a vulnerability in human psychology” by creating a “social-validation feedback loop”... you don’t realize it, but you are being programmed

ProPublica published an in-depth investigation into the algorithms that Facebook uses to determine what it considers “hate speech”... Facebook’s rules were being inconsistently applied... to learn more ... its first Facebook Messenger bot... to collect stories about people’s experiences with hate speech on Facebook... to help quantify the organiz…

Users will be able to mark stories as fake... algorithm will look at whether a large number of people are reporting a particular article, whether or not the article is going viral, and whether the article has a high rate of shares... create an algorithm-vetted set of links that then goes on to a team of researchers within Facebook... links are sent…
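The excerpt above names three signals (volume of user reports, virality, share rate) that feed an algorithm-vetted list passed on to human researchers. A minimal sketch of how such a pre-filter might look follows. Everything in it is an illustrative assumption rather than Facebook's actual method: the `ArticleStats` fields, the threshold values, and the rule for combining the signals are all hypothetical, since the source does not say how the signals are weighted.

```python
from dataclasses import dataclass


@dataclass
class ArticleStats:
    """Hypothetical per-link counters; field names are assumptions."""
    url: str
    views: int
    shares: int
    fake_reports: int
    views_last_hour: int


# Hypothetical thresholds, chosen only to make the example concrete.
MIN_REPORTS = 50               # "a large number of people are reporting"
VIRAL_VIEWS_PER_HOUR = 10_000  # "the article is going viral"
HIGH_SHARE_RATE = 0.05         # "a high rate of shares" (shares per view)


def signals(article: ArticleStats) -> dict:
    """Evaluate the three signals named in the excerpt for one link."""
    share_rate = article.shares / article.views if article.views else 0.0
    return {
        "many_reports": article.fake_reports >= MIN_REPORTS,
        "going_viral": article.views_last_hour >= VIRAL_VIEWS_PER_HOUR,
        "high_share_rate": share_rate >= HIGH_SHARE_RATE,
    }


def review_queue(articles: list) -> list:
    """Return URLs to hand to human reviewers.

    Assumed combination rule: a link needs many reports plus at least
    one spread signal. The source does not specify the real rule.
    """
    queue = []
    for article in articles:
        s = signals(article)
        if s["many_reports"] and (s["going_viral"] or s["high_share_rate"]):
            queue.append(article.url)
    return queue
```

Under these assumptions the algorithm only narrows the list; the decision about whether a link is actually false stays with the human reviewers, as the excerpt describes.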

Not only is Facebook not providing little red warnings along with links to potentially specious news—it’s now blocking links to the plugin that did... “It would seem I’ve caused them some embarrassment by showing them to be full of bull when it comes to their supposed inability to address fake news and they are punishing me for it.”... Update #…

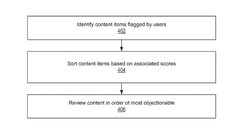

Facebook’s application for Patent 0350675: “systems and methods to identify objectionable content.” ... filed in June 2015, describes a sophisticated system for identifying inappropriate text and images and removing them from the network... improve the detection of pornography, hate speech, and bullying... much easier to identify than false news s…

There’s large-scale, statistically significant research into the impact of search results on political views... Google is doing a horrible, horrible job of delivering answers here. It can and should do better... people are finally saying, ‘Gee, Facebook and Google really have a lot of power’ like it’s this big revelation. And it’s like, ‘D’oh.’”…

Zuckerberg’s emerging dilemma. He doesn’t want Facebook ... to be an arbiter of what’s legit and what’s not. But if Facebook is now going to prohibit fake news sites from using its ad network to sell ads, it will need a list of its own... Google has long had its own list of legitimate news sources... hasn’t apparently wanted to cut into its own…

There’s bad information out there that’s not necessarily fake. It’s never as clear-cut as you think... Facebook’s algorithm may not understand the various shades of falsehood. Facebook could tweak its algorithm to promote related articles from sites like FactCheck.org so they show up next to questionable stories on the same topic in the news fee…

Facebook makes billions of editorial decisions every day. And often they are bad editorial decisions — steering people to sensational, one-sided, or just plain inaccurate stories. The fact that these decisions are being made by algorithms rather than human editors doesn’t make Facebook any less responsible for the harmful effect on its users and t…

Facebook wants to have the responsibility of a publisher but also to be seen as a neutral carrier of information that is not in the position of making news judgments... when Facebook was still censoring the napalm photo ... the site’s trending bar began posting ... a story suggesting the September 11 attacks were caused by a “controlled demolition”.

There is no such thing as neutrality when it comes to media. That has long been a fiction... It’s also dangerous to assume that the “solution” is to make sure that “both” sides of an argument are heard equally... It is even more dangerous, however, to think that relying more on algorithms will remove this bias. Recognizing bias and enabling process…

The filter bubble... has evolved. Algorithms, network effects, and zero-cost publishing are enabling crackpot theories to go viral... impacting the decisions of policy makers and shaping public opinion, whether they are verified or not... Facebook's news feed ... tailored just to us ... to keep us interested and happy... drives engagement and mor…

Facebook is not a friend of journalism... Yes, Instant Articles are sexy and monetisable... a hugely important route to readers, but the more dependent we get on them, the more they’ll be able to charge us to access that audience... Facebook is seeing an alarming (to them) drop in sharing of personal information... we’ll see Facebook start to turn d…

Facebook’s news feed algorithm can be tweaked to make us happy or sad; it can expose us to new and challenging ideas or insulate us in ideological bubbles... The algorithm’s rankings correspond to the user’s preferences “sometimes,” Facebook acknowledges... not the success rate you might expect... A glimpse into its inner workings sheds light ... on…

taking us towards what an American legal scholar, Frank Pasquale, has christened the “black box society”... subtitle – “the secret algorithms that control money and information” ... it’s not just about money and information but increasingly about most aspects of contemporary life... we know that Facebook algorithms can influence the moods and t…

the social network will share analytics, and Instant Articles is compatible with audience measurement and attribution tools... won't receive preferential treatment from Facebook's News Feed sorting algorithm... Facebook will parse HTML and RSS to display articles with fonts, layouts, and formats ... also providing vivid media options like embe…
